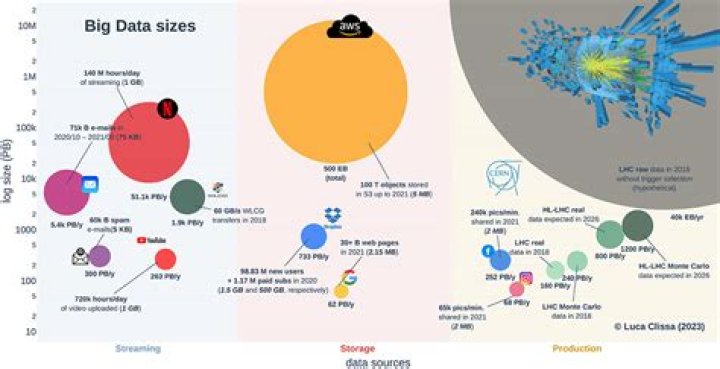

What is the size of big data?

Isabella Bartlett

Big Data, while impossible to define precisely, typically refers to data storage amounts in excess of one terabyte (TB). Big Data has three main characteristics: Volume (amount of data), Velocity (speed of data in and out), and Variety (range of data types and sources).

What is the minimum size that qualifies as big data?

The original definition of big data suggests that organisations can only develop a big data strategy if they have vast volumes of data, terabytes or more. IDC even defines big data projects as projects that contain a minimum of 100 terabytes of collected data.

Can I say 1024 GB of data as big data?

“Big data” is a term relative to the available computing and storage power on the market — so in 1999, one gigabyte (1 GB) was considered big data. Today, it may consist of petabytes (1,024 terabytes) or exabytes (1,024 petabytes) of information, including billions or even trillions of records from millions of people.
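
The question above can be checked with a little arithmetic. The following is a minimal sketch, assuming the two thresholds quoted in this article (one terabyte as the informal floor, 100 terabytes for IDC's definition); the constant and function names are illustrative and do not come from any standard. It also shows that the answer depends on whether a terabyte is counted as 1,024 GB or 1,000 GB, since both conventions appear in this article.

```python
# Thresholds quoted in this article, in terabytes (illustrative constants).
INFORMAL_THRESHOLD_TB = 1    # "in excess of one terabyte"
IDC_THRESHOLD_TB = 100       # IDC's minimum for a big data project


def gb_to_tb(gigabytes: float, binary: bool = True) -> float:
    """Convert gigabytes to terabytes.

    binary=True assumes 1 TB = 1,024 GB; binary=False assumes 1 TB = 1,000 GB.
    """
    return gigabytes / (1024 if binary else 1000)


def exceeds(size_tb: float, threshold_tb: float) -> bool:
    """Return True only if size_tb is strictly greater than the threshold."""
    return size_tb > threshold_tb


size_tb = gb_to_tb(1024)                         # exactly 1.0 TB in binary units
print(exceeds(size_tb, INFORMAL_THRESHOLD_TB))   # False: equal to 1 TB, not in excess of it
print(exceeds(size_tb, IDC_THRESHOLD_TB))        # False: far below 100 TB
```

Under the decimal convention the same 1,024 GB comes to 1.024 TB, which just clears the informal one-terabyte mark but is still nowhere near the 100 TB figure.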

What data size makes data big data?

Big data refers to the large, diverse sets of information that grow at ever-increasing rates. It encompasses the volume of information, the velocity or speed at which it is created and collected, and the variety or scope of the data points being covered (known as the “three v’s” of big data).

What qualifies as big data?

The definition of big data is data that contains greater variety, arriving in increasing volumes and with more velocity. … Put simply, big data is larger, more complex data sets, especially from new data sources. These data sets are so voluminous that traditional data processing software just can’t manage them.

What is the use of big data?

Companies use big data in their systems to improve operations, provide better customer service, create personalized marketing campaigns and take other actions that, ultimately, can increase revenue and profits.

What is an example of big data?

Big data is a term used to describe a collection of data that is huge in size and yet growing exponentially with time. Examples of big data analytics include stock exchanges, social media sites, jet engines, etc.

What is petabyte?

An extremely large unit of digital data, one petabyte is equal to 1,000 terabytes (or 1,024 terabytes when binary units are used). Some estimates hold that a petabyte is the equivalent of 20 million tall filing cabinets or 500 billion pages of standard printed text.

What are the 5 Vs of big data?

The 5 V’s of big data (velocity, volume, value, variety and veracity) are the five main and innate characteristics of big data.

Is 1GB data enough for a day?

1GB (or 1000MB) is about the minimum data allowance you’re likely to want, as with that you could browse the web and check email for up to around 40 minutes per day.

How much data do we consume daily?

The average American consumes about 34 gigabytes of data and information each day — an increase of about 350 percent over nearly three decades — according to a report published Wednesday by researchers at the University of California, San Diego.

What is the difference between big data and data?

Any definition is a bit circular, as “Big” data is still data of course. Data is a set of qualitative or quantitative variables – it can be structured or unstructured, machine readable or not, digital or analogue, personal or not. … Hence, BIG DATA, is not just “more” data.

What is big data small data?

It is data in a volume and format that makes it accessible, informative and actionable. The term “big data” is about machines and “small data” is about people. This is to say that eyewitness observations or five pieces of related data could be small data. Small data is what we used to think of as data.

What is big data PDF?

The term “Big Data” refers to the heterogeneous mass of digital data produced by companies and individuals whose characteristics (large volume, different forms, speed of processing) require specific and increasingly sophisticated computer storage and analysis tools.

What is large data set?

For the purposes of this guide, large datasets are sets of data that may be from large surveys or studies and contain raw data, microdata (information on individual respondents), or all variables for export and manipulation.

What are the 4 Vs of big data?

IBM data scientists break big data into four dimensions: volume, variety, velocity and veracity.

Why is big data different?

Many big-data applications use external information that is not proprietary, such as social network modeling and sentiment analysis. Moreover, big data analytics are dependent on extensive storage capacity and processing power, requiring a flexible grid that can be reconfigured for different needs.

What are the 7 V's of big data?

The 7 Vs of big data are volume, velocity, variety, variability, veracity, value, and visibility.

What are the 3 V's of big data?

Dubbed the three Vs, volume, velocity, and variety are key to understanding how we can measure big data and just how different “big data” is from old-fashioned data.

What is the most important V of big data?

Veracity is often described as the most important “V” of big data.

How big is a yottabyte?

A yottabyte was for many years the largest unit approved as a standard size by the International System of Units (SI); the larger ronna- and quetta- prefixes were added in 2022. A yottabyte is 1 septillion bytes or, as an integer, 1,000,000,000,000,000,000,000,000 bytes.

How many petabytes is the Internet?

Science Focus estimates that Google, Amazon, Microsoft and Facebook collectively store at least 1,200 petabytes. (That’s not even including well-known storage sites like Dropbox.) A thousand gigabytes equals a terabyte – or 1 million megabytes. So, 1,200 petabytes is 1.2 million terabytes.

How much is YouTube storage capacity?

By one estimate, YouTube owns around 400,000 TB of storage.

What is 500MB of data?

A 500MB data plan will allow you to browse the internet for around 6 hours, to stream 100 songs or to watch 1 hour of standard-definition video. Nowadays, the key difference between mobile phone price plans is how many gigabytes of data they come with.

Is 1.5 GB of data a lot?

With 1GB of data you can listen to almost 35 hours of music. … At that rate, you’d burn through close to 1.5GB in an hour. On Netflix, you can stream about 1 hour of standard-definition video per gigabyte.

What is 6GB data?

A 6GB data plan will allow you to browse the internet for around 72 hours, to stream 1,200 songs or to watch 12 hours of standard-definition video.
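
The 6GB figures follow from the 500MB ones by simple proportion. Here is a minimal sketch, assuming usage scales linearly with plan size and that 1GB is counted as 1,000MB, as this article does elsewhere; the names `BASE_USAGE` and `estimate_usage` are made up for illustration.

```python
# Figures quoted in this article for a 500 MB plan.
BASE_PLAN_MB = 500
BASE_USAGE = {"browsing_hours": 6, "songs": 100, "sd_video_hours": 1}


def estimate_usage(plan_mb: float) -> dict:
    """Scale the 500 MB figures linearly to another plan size given in MB."""
    factor = plan_mb / BASE_PLAN_MB
    return {activity: amount * factor for activity, amount in BASE_USAGE.items()}


# A 6 GB plan, taking 1 GB = 1,000 MB.
print(estimate_usage(6 * 1000))
# {'browsing_hours': 72.0, 'songs': 1200.0, 'sd_video_hours': 12.0}
```

Each figure is an alternative use of the whole allowance, not something you get in addition to the others, and real consumption varies with the sites visited and the streaming quality.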

What is the size of the Internet?

Eric Schmidt, the former CEO of Google, which runs the world’s largest index of the Internet, estimated the size at roughly 5 million terabytes of data. That’s over 5 billion gigabytes of data, or 5 trillion megabytes.

How fast is data growing?

The total amount of data created, captured, copied, and consumed globally is forecast to increase rapidly, reaching 64.2 zettabytes in 2020. Over the next five years up to 2025, global data creation is projected to grow to more than 180 zettabytes. In 2020, the amount of data created and replicated reached a new high.
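
To put those two figures on a yearly basis, one can compute the implied compound annual growth rate. This is a rough sketch that assumes smooth compounding between the 2020 and 2025 values quoted above; the function name is illustrative.

```python
def compound_annual_growth_rate(start: float, end: float, years: int) -> float:
    """Return the constant yearly growth rate that turns start into end."""
    return (end / start) ** (1 / years) - 1


# 64.2 zettabytes in 2020, projected to 180 zettabytes in 2025.
rate = compound_annual_growth_rate(64.2, 180.0, 5)
print(f"{rate:.1%}")  # roughly 23% per year
```

Since the source says "more than 180 zettabytes", this works out to a lower bound on the implied rate.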

How much data is in the cloud?

According to recent research by Nasuni, there is over 1 exabyte of data stored in the cloud. That is 1,024 petabytes, or 1,073,741,824 gigabytes.
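
These conversions use binary units, where each step up the scale is a factor of 1,024. Below is a small sketch for checking them, assuming that convention throughout; the unit list and the `convert` helper are illustrative only.

```python
# Units in ascending order; each one is assumed to be 1,024 times the previous.
BINARY_UNITS = ["bytes", "KB", "MB", "GB", "TB", "PB", "EB", "ZB", "YB"]


def convert(value: float, from_unit: str, to_unit: str) -> float:
    """Convert between units, assuming a factor of 1,024 per step."""
    steps = BINARY_UNITS.index(from_unit) - BINARY_UNITS.index(to_unit)
    return value * 1024 ** steps


print(convert(1, "EB", "PB"))  # 1024
print(convert(1, "EB", "GB"))  # 1073741824
```

With decimal units (a factor of 1,000 per step) the same exabyte would instead be 1,000 petabytes or 1 billion gigabytes.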

What big data is and is not?

Big Data is not a function of a single data set; it is a function of multiple data sets coming from multiple sources. Running analytics across a massive data set is BI on steroids; running it against multiple, disparate data sets is Big Data.