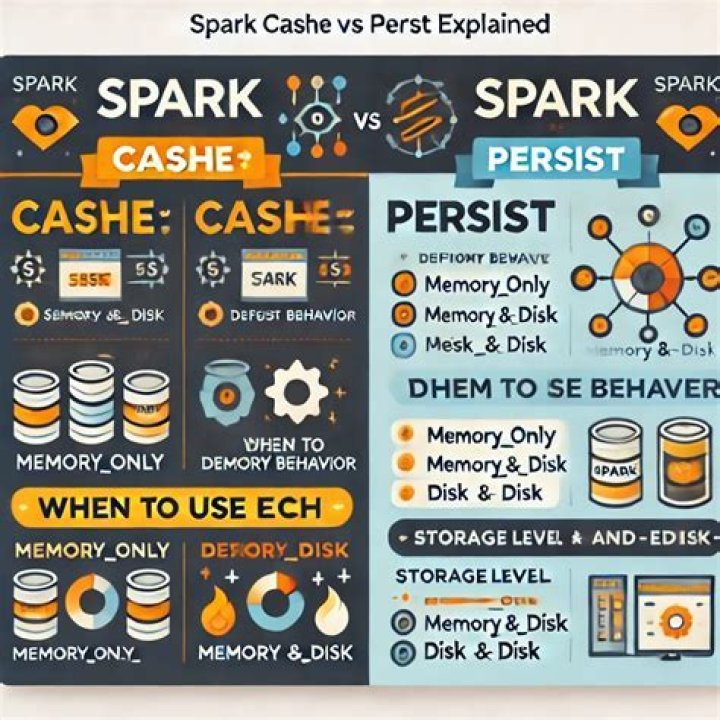

What is the difference between persist() and cache()?

Andrew White

The difference between cache() and persist() is that cache() always uses the default storage level, MEMORY_ONLY, while persist() lets us choose among various storage levels (described below). … When we persist an RDD, each node stores in memory any partition of it that it computes and reuses it in later actions.
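The relationship between the two calls can be sketched in plain Python. This is a toy model, not the Spark API: `ToyRDD` and `DEFAULT_LEVEL` are hypothetical names, and the storage levels are plain strings used only to show that cache() amounts to persist() with the default level.

```python
from typing import Optional

DEFAULT_LEVEL = "MEMORY_ONLY"  # the default level named in the text


class ToyRDD:
    """Hypothetical stand-in for an RDD; it only tracks the chosen storage level."""

    def __init__(self) -> None:
        self.storage_level: Optional[str] = None  # None means "not persisted"

    def persist(self, level: str = DEFAULT_LEVEL) -> "ToyRDD":
        # persist() lets the caller pick the storage level.
        self.storage_level = level
        return self

    def cache(self) -> "ToyRDD":
        # cache() takes no level: it is persist() with the default.
        return self.persist(DEFAULT_LEVEL)


cached = ToyRDD().cache()
on_disk = ToyRDD().persist("DISK_ONLY")
print(cached.storage_level)
print(on_disk.storage_level)
```

In this sketch the only way to get anything other than the default level is to call persist() with an explicit argument, which mirrors the distinction described above.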


What is persist in PySpark?

persist() is an optimization technique in PySpark for DataFrames and RDDs. It stores partial results in memory so that later transformations in the same PySpark session can reuse them.

What is cache persist?

Caching and persistence are optimization techniques for (iterative and interactive) Spark computations. They help save interim partial results so they can be reused in subsequent stages. These interim results, as RDDs, are kept in memory (the default) or in more durable storage such as disk, and can optionally be replicated.

What is the difference between broadcast and cache in Spark?

Caching is a key tool for iterative algorithms and fast interactive use. Broadcast variables allow the programmer to keep a read-only variable cached on each machine rather than shipping a copy of it with tasks. They can be used, for example, to give every node a copy of a large input dataset in an efficient manner.

How does persist work in Spark?

The actual persistence takes place during the first action call on the Spark RDD. Spark provides multiple storage options, such as memory or disk, as well as replication levels. When we apply the persist method, the resulting RDDs can be stored at different storage levels.
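The point that persistence only happens on the first action can be illustrated with a small plain-Python model. This is a sketch under stated assumptions, not Spark code: `LazyDataset` and its `compute_count` counter are hypothetical names introduced here to make the recomputation visible.

```python
class LazyDataset:
    """Hypothetical lazy dataset: nothing is computed until an action runs."""

    def __init__(self, source, fn):
        self._source = list(source)
        self._fn = fn
        self._persist_requested = False
        self._stored = None
        self.compute_count = 0  # how many times the data was (re)computed

    def persist(self):
        # Only marks the dataset; no computation happens here.
        self._persist_requested = True
        return self

    def _materialize(self):
        if self._stored is not None:
            return self._stored  # reuse the stored result
        self.compute_count += 1
        result = [self._fn(x) for x in self._source]
        if self._persist_requested:
            self._stored = result  # stored during the first action
        return result

    def count(self):  # an "action"
        return len(self._materialize())

    def collect(self):  # another "action"
        return list(self._materialize())


persisted = LazyDataset(range(5), lambda x: x * x).persist()
computes_before_action = persisted.compute_count
persisted.count()
persisted.collect()

plain = LazyDataset(range(5), lambda x: x * x)
plain.count()
plain.collect()

print(computes_before_action, persisted.compute_count, plain.compute_count)
```

In this model, calling persist() alone computes nothing; the persisted dataset is computed once across both actions, while the unpersisted one is recomputed for each action.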

What is persist and Unpersist in Spark?

When we persist or cache an RDD in Spark, it holds some memory (RAM) on the machine or the cluster. … Once we are sure we no longer need the object in Spark’s memory for any iterative process optimizations, we can call the method unpersist(). Once this is done, we can check the Storage tab in Spark’s UI again.

Which method is used in Pyspark to persist RDD in default storage?

Different storage levels are available for storing persisted RDDs. Use these levels by passing a StorageLevel object (Scala, Java, Python) to persist(). However, the cache() method is used for the default storage level, which is StorageLevel.

What is the difference between Spark checkpoint and persist to a disk?

Checkpointing stores the RDD in reliable storage such as HDFS and deletes the lineage that created it. When we persist an RDD with the DISK_ONLY storage level, its partitions are written to disk so that later uses of the RDD read them back instead of recomputing them, but the lineage is kept, so a lost partition can still be recomputed.
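The lineage difference can be sketched with a toy chain of nodes. This is an illustrative model only: `Node`, `lineage()`, and the step names are hypothetical and stand in for an RDD and the transformations that produced it.

```python
class Node:
    """Hypothetical dataset node that remembers the step it was derived from."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.persisted = False

    def lineage(self):
        # Walk back through the parents to list every step needed to rebuild this node.
        names, node = [], self
        while node is not None:
            names.append(node.name)
            node = node.parent
        return names

    def persist(self):
        # Data is kept for reuse, but the lineage is left untouched.
        self.persisted = True
        return self

    def checkpoint(self):
        # Data is saved elsewhere and the lineage is cut off.
        self.parent = None
        return self


persisted = Node("filter", Node("map", Node("read"))).persist()
checkpointed = Node("filter", Node("map", Node("read"))).checkpoint()

print(persisted.lineage())
print(checkpointed.lineage())
```

After persist() the node still knows how to rebuild itself from its parents; after checkpoint() it no longer refers to them.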

How do you persist an RDD in Spark?

You can mark an RDD to be persisted using the persist() or cache() methods on it. The first time it is computed in an action, it will be kept in memory on the nodes.

When should I use persist in Spark?

Using cache() and persist() methods, Spark provides an optimization mechanism to store the intermediate computation of a Spark DataFrame so they can be reused in subsequent actions. When you persist a dataset, each node stores its partitioned data in memory and reuses them in other actions on that dataset.

How do you persist a table in Spark?

Persistent tables will still exist even after your Spark program has restarted, as long as you maintain your connection to the same metastore. A DataFrame for a persistent table can be created by calling the table method on a SparkSession with the name of the table.

What is the advantage of persisting data in serialized format?

As serialized data: this approach may be slower, since serialized data is more CPU-intensive to read than deserialized data; however, it is often more memory efficient, since it allows the user to choose a more efficient representation.
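The memory-versus-CPU trade-off can be made concrete with Python's own `pickle` module. This is only an analogy: Spark uses its own serializers, and the `records` list below is made-up sample data. The size estimate relies on `sys.getsizeof`, so it assumes CPython.

```python
import pickle
import sys

records = list(range(1000))  # hypothetical partition contents

# "Deserialized": live Python objects, each carrying its own object header.
deserialized_bytes = sys.getsizeof(records) + sum(sys.getsizeof(r) for r in records)

# "Serialized": one compact byte string for the whole partition.
blob = pickle.dumps(records, protocol=pickle.HIGHEST_PROTOCOL)
serialized_bytes = len(blob)

# Reading serialized data back costs extra CPU work on every access.
restored = pickle.loads(blob)

print(deserialized_bytes, serialized_bytes)
```

The serialized form is much smaller here, but every read has to pay for `pickle.loads`, which is the same trade-off the paragraph above describes.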

What is cache in spark?

Caching RDDs in Spark: It is one mechanism to speed up applications that access the same RDD multiple times. … The difference among them is that cache() will cache the RDD into memory, whereas persist(level) can cache in memory, on disk, or off-heap memory according to the caching strategy specified by level.

When should I broadcast in spark?

- If you have a huge array that is accessed from Spark closures, for example some reference data, this array will be shipped to each Spark node with the closure. …

- And some RDD. …

- In this case the array will be shipped with the closure each time. …

- With a broadcast variable you’ll get a huge performance benefit.

How broadcast variables improve performance?

Using broadcast variables can improve performance by reducing the amount of network traffic and data serialization required to execute your Spark application.
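A back-of-the-envelope calculation shows where the saving comes from. The numbers below (`num_nodes`, `tasks_per_node`, the `lookup` table) are made-up assumptions, and the model ignores how Spark actually distributes broadcast blocks; it only compares "one copy per task" with "one copy per node".

```python
import pickle

# Hypothetical reference data that every task needs to read.
lookup = {i: str(i) for i in range(10_000)}
payload_bytes = len(pickle.dumps(lookup))

num_nodes = 4        # assumed cluster size
tasks_per_node = 25  # assumed number of tasks scheduled on each node

# Captured in the closure: a copy travels with every task.
bytes_with_closure = payload_bytes * num_nodes * tasks_per_node

# Broadcast: each node receives the data once and its tasks share it.
bytes_with_broadcast = payload_bytes * num_nodes

print(bytes_with_closure // bytes_with_broadcast)
```

Under these assumptions the traffic ratio equals the number of tasks per node, so the benefit grows with the number of tasks that reuse the same data.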

How do I cache data in spark?

- DISK_ONLY: Persist data on disk only in serialized format.

- MEMORY_ONLY: Persist data in memory only in deserialized format.

- MEMORY_AND_DISK: Persist data in memory and if enough memory is not available evicted blocks will be stored on disk.

- OFF_HEAP: Data is persisted in off-heap memory.

How do I clear Pyspark cache?

You can use sqlContext.clearCache() to remove everything from the cache. If you used the cache() method to persist an RDD, note that cache() just calls persist(), so to remove the cache for that RDD, call unpersist(). Spark also automatically monitors cache usage on each node and drops old data partitions in a least-recently-used (LRU) fashion.
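The LRU behavior and explicit removal can be sketched with a small block store. This is a simplified model, not Spark's block manager: `LruBlockStore`, its capacity counted in blocks, and the partition ids are all hypothetical.

```python
from collections import OrderedDict


class LruBlockStore:
    """Hypothetical cache that evicts the least recently used block when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._blocks = OrderedDict()  # oldest entry first, newest last

    def put(self, block_id, data):
        if block_id in self._blocks:
            self._blocks.move_to_end(block_id)
        self._blocks[block_id] = data
        while len(self._blocks) > self.capacity:
            self._blocks.popitem(last=False)  # drop the least recently used block

    def get(self, block_id):
        if block_id not in self._blocks:
            return None
        self._blocks.move_to_end(block_id)  # reading counts as a use
        return self._blocks[block_id]

    def unpersist(self, block_id):
        # Explicit removal, independent of LRU order.
        self._blocks.pop(block_id, None)

    def block_ids(self):
        return list(self._blocks)


store = LruBlockStore(capacity=2)
store.put("p0", "a")
store.put("p1", "b")
store.get("p0")       # p0 is now more recently used than p1
store.put("p2", "c")  # store is full, so p1 is evicted
after_eviction = store.block_ids()
store.unpersist("p0")
after_unpersist = store.block_ids()
print(after_eviction, after_unpersist)
```

Eviction happens on its own when space runs out, while unpersist() frees a block immediately without waiting for memory pressure.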

What is the difference between MAP and flatMap in spark?

As per the definition, the difference between map and flatMap is:

- map: returns a new RDD by applying the given function to each element of the RDD. The function in map returns only one item.

- flatMap: similar to map, it returns a new RDD by applying a function to each element of the RDD, but the output is flattened.
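The same distinction can be shown on ordinary Python lists, with `itertools.chain.from_iterable` playing the role of the flattening step. This is an analogy using local lists rather than RDDs, and the sample `lines` are made up.

```python
from itertools import chain

lines = ["hello world", "spark"]  # hypothetical input records

# map: one output item per input item (here, one list of words per line).
mapped = list(map(str.split, lines))

# flatMap: apply the same function, then flatten the results into one sequence.
flat_mapped = list(chain.from_iterable(map(str.split, lines)))

print(mapped)       # [['hello', 'world'], ['spark']]
print(flat_mapped)  # ['hello', 'world', 'spark']
```

The mapped result has the same length as the input, while the flattened result has one entry per word.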

How do you cache a DataFrame in Pyspark?

- When you cache a DataFrame, create a new variable for it: cachedDF = df.cache(). …

- Unpersist the DataFrame after it is no longer needed using cachedDF.unpersist(). …

- Before you cache, make sure you are caching only what you will need in your queries. …

- Use the caching only if it makes sense.

Does spark cache automatically?

| Feature | Delta cache | Apache Spark cache |
| --- | --- | --- |
| Triggered | Automatically, on the first read (if cache is enabled). | Manually, requires code changes. |

Can we trigger automated cleanup in spark?

Answer: Yes, we can trigger automated clean-ups in Spark to handle the accumulated metadata. It can be done by setting the parameters, namely, “spark.

What is the function of map() in Spark?

Spark's map function takes one element as input, processes it according to custom code (specified by the developer), and returns one element at a time. map transforms an RDD of length N into another RDD of length N, so the input and output RDDs have the same number of records.

What is a spark checkpoint?

Checkpointing is actually a feature of Spark Core (that Spark SQL uses for distributed computations) that allows a driver to be restarted on failure with previously computed state of a distributed computation described as an RDD .

What is spark context?

A SparkContext represents the connection to a Spark cluster, and can be used to create RDDs, accumulators and broadcast variables on that cluster. Only one SparkContext should be active per JVM.

What is cache() in PySpark?

In Spark, there are two function calls for caching an RDD: cache() and persist(level: StorageLevel). The difference among them is that cache() will cache the RDD into memory, whereas persist(level) can cache in memory, on disk, or off-heap memory according to the caching strategy specified by level.

What is the difference between RDD and DataFrame in Spark?

RDD: An RDD is a distributed collection of data elements spread across many machines in the cluster. RDDs are a set of Java or Scala objects representing data.

DataFrame: A DataFrame is a distributed collection of data organized into named columns. It is conceptually equal to a table in a relational database.

What are the different levels of persistence in Spark?

- MEMORY_ONLY.

- MEMORY_ONLY_SER.

- MEMORY_AND_DISK.

- MEMORY_AND_DISK_SER.

- DISK_ONLY.

- OFF_HEAP.

How do I cache RDD?

Cached RDD partitions are held in executor memory. The fraction of executor memory used for caching is controlled by the config spark. storage.

What is catalyst Optimizer in spark?

At the core of Spark SQL is the Catalyst optimizer, which leverages advanced programming language features (e.g. Scala’s pattern matching and quasi quotes) in a novel way to build an extensible query optimizer. Easily add new optimization techniques and features to Spark SQL. …

Is RDD a memory?

It depends on the storage level. With MEMORY_ONLY, the RDD is stored as deserialized Java objects in the JVM. With MEMORY_AND_DISK, if the full RDD does not fit in memory, the remaining partitions are stored on disk instead of being recomputed every time they are needed. The serialized levels store the RDD as serialized Java objects, with one byte array per partition.